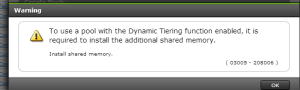

Quick post here! If you're setting up a new Hitachi H800 (G400/600) and are trying to set up a Hitachi Dynamic Tiering (HDT) pool, you may get the following error: “To use a pool with the Dynamic Tiering function enabled, it is required to install additional shared memory.”

To fix this, you will need to log in to the Maintenance Utility (the management software that runs directly on the array). Here is the procedure.

The first step is figuring out how much memory you need to reconfigure as shared memory. This depends on how much capacity is being dedicated to Dynamic Provisioning pools. Because the documentation specifies capacity in Pb (lowercase b, i.e. petabits, which is a bit odd), these numbers are smaller than they first appear.

- No Extension DP – 0.2 Pb with 5 GB of memory overhead

- No Extension HDT – 0.5 Pb with 5 GB of memory overhead

- Extension 1 – 2 Pb with 9 GB of memory overhead

- Extension 2 – 6.5 Pb with 13 GB of memory overhead

There are also Extensions 3 and 4 (which use 17 GB and 25 GB respectively); however, I believe they are mainly needed for larger ShadowImage, Volume Migration, Thin Image, and TrueCopy configurations.
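To make the sizing arithmetic concrete, here is a minimal Python sketch that picks the smallest configuration from the list above for a given pool size. It assumes, as the post does, that "Pb" means petabits, so a pool given in decimal terabytes is converted with 1 Pb = 10^15 bits = 125 TB. It also assumes Extensions 1 and 2 apply to both DP and HDT pools; the list does not say this explicitly. The names `TIERS`, `tb_to_pb`, and `pick_shared_memory` are hypothetical helpers for illustration, not part of any Hitachi tool.

```python
# Shared-memory tiers as listed above:
# (configuration name, pool type it applies to, max pool capacity in Pb, shared memory in GB).
# ASSUMPTION: Extensions 1 and 2 cover both DP and HDT pools.
TIERS = [
    ("No Extension (DP)", "DP", 0.2, 5),
    ("No Extension (HDT)", "HDT", 0.5, 5),
    ("Extension 1", "any", 2.0, 9),
    ("Extension 2", "any", 6.5, 13),
]


def tb_to_pb(tb: float) -> float:
    """Convert decimal terabytes to petabits (1 TB = 8 * 10**12 bits, 1 Pb = 10**15 bits)."""
    return tb * 8 / 1000


def pick_shared_memory(pool_tb: float, use_hdt: bool) -> tuple[str, int]:
    """Return the smallest listed configuration whose capacity limit covers the pool."""
    pool_type = "HDT" if use_hdt else "DP"
    pool_pb = tb_to_pb(pool_tb)
    for name, applies_to, limit_pb, memory_gb in TIERS:
        if applies_to not in (pool_type, "any"):
            continue  # skip base tiers meant for the other pool type
        if pool_pb <= limit_pb:
            return name, memory_gb
    raise ValueError(f"{pool_tb} TB ({pool_pb:.2f} Pb) exceeds the tiers listed here")


if __name__ == "__main__":
    for tb, hdt in [(25, False), (30, False), (60, True), (500, True)]:
        name, gb = pick_shared_memory(tb, hdt)
        print(f"{tb} TB {'HDT' if hdt else 'DP'} pool: {name}, {gb} GB shared memory")
```

Under this reading, a 60 TB HDT pool (0.48 Pb) still fits the base configuration, while a 500 TB pool (4 Pb) needs Extension 2. Always confirm against the official guide before installing.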

In the Maintenance Utility window:

1. Click Hardware > Controller Chassis.
2. In the Controller Chassis window, click the CTLs tab.
3. Click Install list, and then click Shared Memory.
4. In the Install Shared Memory window, select the extensions you need and click Install (and grab a cup of coffee, because this takes a while).

This can be done non-disruptively, but it is best done during a period of lower I/O, since you are taking cache away from the array for the thin provisioning lookup tables.

You can find all this information on page 171 of the following guide.