PSA: Developers and SQL admins do not understand storage

Thin Provisioning is one of my favorite technologies, but with all great technology comes great responsibility.

This afternoon I got a call from a customer having an issue with a SQL backup. They were preparing a major code push and were running a scripted full SQL backup to have a quick restore point if something goes wrong.

I was sent the following

10 percent processed.

20 percent processed.

30 percent processed.

40 percent processed.

50 percent processed.

60 percent processed.

70 percent processed.

80 percent processed.

90 percent processed.

Msg 64, Level 20, State 0, Line 0

A transport-level error has occurred when receiving results from the server. (provider: TCP Provider, error: 0 - The specified network name is no longer available.)

The server had frozen from a thin provisioning issue, but tracing through the workflow that caused this highlighted a common problem of SQL administrators everywhere. Backups where being done to the same volume/VMDK as the actual database. For every 1GB of SQL database there was another 10GB of backups, wasting expensive tier 1 storage.

The Problem:

SQL developers LOVE to make backups at the application level that they can touch/see/understand. They do not trust your magical Veeam/VDP-A. Combined with NTFS being a relatively thin unfriendly file system (always writing to new LBA’s when possible) this means that even if a database isn’t growing much, if backups get placed on the same volume any attempt at being thin even on the back end array is going to require extra effort to reclaim. They also do not understand the concept of a shared failure domain, or data locality. If left to their own devices put the backups on the same RAID group of expensive 15K or flash drives, and go so far as to put it on the same volume/VMDK even if possible. Outside of the obvious problems for performance, risk, cost, and management overhead, this also means that your Changed Block Tracking and backup software is going to be baking up (or having to at least scan) all of these full backups every day.

The Solution:

Give up on arguing with them that your managed backups are good enough. Let them have their cake, but at least pick where the cake comes from and goes.

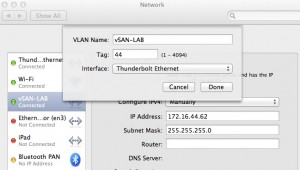

Create a VMDK on a separate array (in a small shop something as cheap as a SATA backed Synology can provide a really cheap NFS/iSCSI target for this). Exclude this drive from your backups (or adjust when it runs so it doesn’t impact your backup windows).

Careful explain to them that this new VMDK (name the volume backups) is where backups go.

Now accept that they will ignore this and keep doing what they have been doing.

In Windows turn on file screens and block the file extensions for SQL backup files.

Next turn on reporting alerts to email you anytime someone tries to write such a file, so you’ll be able to preemptively offer to help them setup the maintence jobs so they will work.